Institute for Universal Understanding

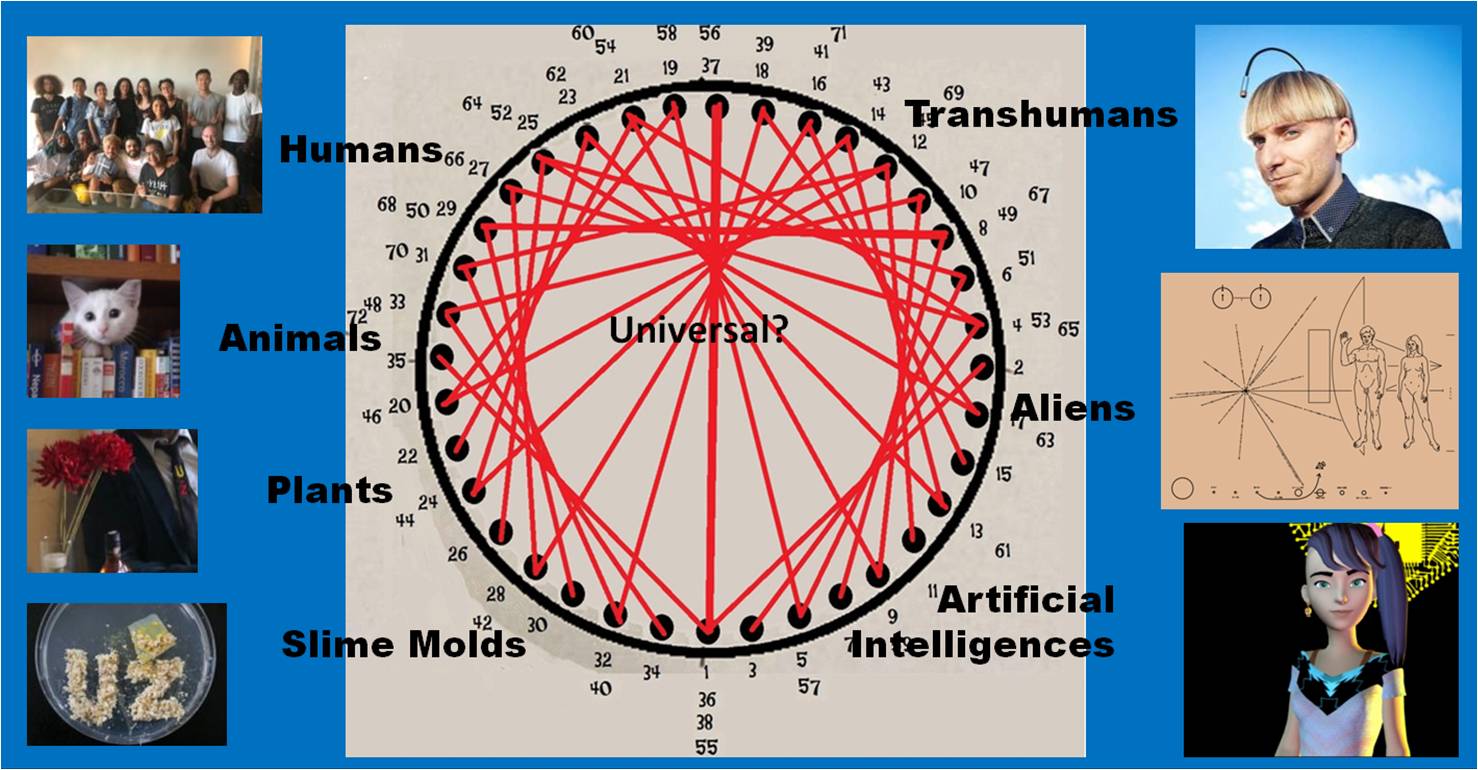

The Institute for Universal Understanding investigates the bodily and cognitive foundations of meaning and the possibilities of communication between biological and artificial agents.

Director: H.E. Max Haarich | max@uzupisuniversity.com

Selected Projects

Project Home (2020)

Project Home aims to redefine the notion of “home” by collecting and sharing the personal stories of people who relocated by choice or necessity, or who struggled with the traditional concept of home for any other reason. In doing so, we hope to inspire others to question some of their limiting beliefs and to recognize their own unique way “to home”. This project was developed at the American Arts Incubator on Social Inclusion together with Esma Bosnjakovic, Barbora Horská, and Nicole Schanzmeier. It is supported by Ars Electronica, the U.S. Department of State, Zero 1 and Metropole Magazine.

Universal Understanding (2019)

We reenacted the drafting of the Universal Declaration of Human Rights in conversation with the chatbot Mitsuku. This project was initiated during the University of the Underground’s NYC Summer School “Post-Nation States”.

Bill of Rights for Artificial Intelligences (work in progress)

We speculate about a bill of rights that secures a tolerant community life among AIs and a peaceful cohabitation with other species, including humans.

Smart Hans (work in progress)

First proof-of-concept (video: Deborah Mora)

Drawing on the mysterious performances of Clever Hans, we created a synthetic horse that reads your mind. “Smart Hans” is an intuitive warning about the potential threat of public surveillance and artificial intelligence. In a very personal way, it shows the potential of AI to penetrate your most private space: your mind. On the other hand, if you play the game often enough, you will automatically become aware of the subtle cues Smart Hans is looking for. By purposefully suppressing or producing these cues, you will learn how to trick artificial intelligence.

Additionally, the installation demonstrates the “Clever Hans effect”, which limits the validity of almost any artificial neural network. The term denotes the general impossibility of determining what a neural network actually learns, or which exact features it uses for its decision making.

The proof-of-concept was realized under the guidance of Ellen Pearlman and Julien Deswaef from ThoughtWorks Arts, together with an amazing team from the Baltan Laboratories Masterclass “Deepfakes – Synthetic Media and Synthesis” including Anja Borowicz Richardson, Bruce Gilchrist, Martina Huynh, Deborah Mora, and Pekka Ollikainen. We will finalize the project in cooperation with Ars Electronica Research Institute knowledge for humanity (k4h+).